People often assume AI advances by adding more hardware.

More GPUs.

More data centers.

More power.

More scale.

For years, that assumption appeared true.

But physical systems are beginning to push back.

Across multiple countries, electrical grids are showing strain. Data centers are becoming harder to place. Energy demand is becoming part of the AI conversation. This is not just a technology story anymore.

It is becoming an infrastructure story.

And that changes the direction of the field.

The Old Model

The previous generation of AI thinking focused on centralized expansion.

The assumption was simple:

intelligence grows by increasing computation indefinitely.

That produced enormous hyperscale systems capable of remarkable results. But it also created a problem.

Some systems process enormous amounts of data, but still overlook simpler ways to become more efficient, more selective, and more intelligent.

That is the risk of the old model: it can become like an elephant working inside a glass shop.

Powerful, impressive, and capable of moving almost anything, but not always sensitive to what is fragile, local, or already under pressure.

AI infrastructure cannot be judged only by how much it can process.

It also has to be judged by how carefully it uses energy, memory, context, and attention.

The Human Systems Problem

When a system grows too large, it can begin to lose sensitivity.

A power grid does not care that a data center is innovative if the local infrastructure cannot support the load.

A community does not experience “AI progress” as an abstract achievement if it arrives as added pressure on energy, land, and water, or as institutional strain.

This is where the human-systems lens matters.

Technology does not exist outside the world.

It sits inside electrical systems, economic systems, local communities, environmental limits, and human nervous systems.

If one layer expands without paying attention to the others, the whole system starts to distort.

A human nervous system works differently.

It does not process everything at maximum force all the time.

It filters.

It prioritizes.

It remembers.

It ignores noise.

It notices patterns.

It spends energy only where energy is needed.

That is what intelligence looks like in living systems.

Not endless processing.

Selective response.

The Small Build That Changed My Thinking

This became real for me while testing the Guardian system for Empathium.

My current Guardian build is not running on a supercomputer.

It is not sitting inside a massive data center.

It is not burning through expensive compute every time it responds.

It is local, small, and deliberately modest.

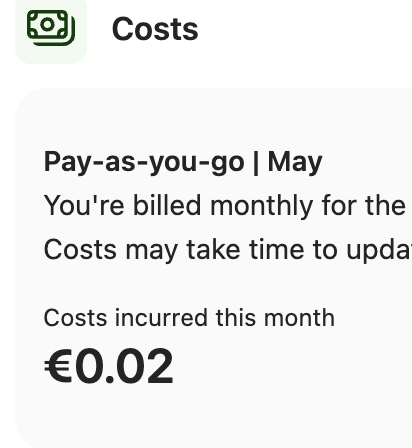

The memory system runs on one of the lowest-cost server tiers available, costing only a few euros per month. The retrieval layer uses vectors to find the most relevant region of meaning-space instead of searching everything blindly. The cost of testing has been measured in cents, not hundreds or thousands of euros.

That matters because it shows a different direction.

Useful AI does not always need to become larger, heavier, and more energy-hungry.

Sometimes it needs to become better organized.

A small system with clean memory, good boundaries, and selective retrieval can do meaningful work without acting like every question requires a supercomputer.

Early Guardian testing has not required supercomputer-scale infrastructure. One current pay-as-you-go billing view shows a monthly cost of only €0.02. That number may change as testing grows, but the signal is important: a lightweight Guardian architecture can start from extremely low-cost computation.

What the Guardian Is Teaching Me

The Guardian is not meant to become a giant centralized intelligence that consumes more and more data forever.

It is meant to support human autonomy.

It uses memory carefully.

It retrieves context only when useful.

It works with structured signals.

It does not need to process everything every time.

That changed how I think about AI infrastructure.

The future does not have to be only larger models, larger data centers, and larger electrical loads.

Some forms of intelligence may come from better memory structure, cleaner retrieval, smaller context windows, and systems that know when not to process more than they need.

That is not a small technical detail.

It is a different philosophy of intelligence.
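
One way to picture "knowing when not to process more" is a retrieval step that is allowed to return very little, or nothing at all. The sketch below is a hypothetical illustration, not the Guardian's actual code: the names `Memory`, `select_context`, `min_relevance`, and `budget_chars` are assumptions made up for this example, and the relevance scores are simply given as inputs rather than computed.

```python
from dataclasses import dataclass


@dataclass
class Memory:
    text: str
    relevance: float  # assumed score in [0, 1], computed elsewhere


def select_context(memories, min_relevance=0.6, budget_chars=200):
    """Keep only relevant memories, best first, within a size budget."""
    # Drop anything below the relevance floor; an empty result is allowed.
    relevant = [m for m in memories if m.relevance >= min_relevance]
    relevant.sort(key=lambda m: m.relevance, reverse=True)

    selected, used = [], 0
    for m in relevant:
        if used + len(m.text) > budget_chars:
            continue  # skip items that would overflow the budget
        selected.append(m)
        used += len(m.text)
    return selected


# Made-up example memories with made-up scores.
memories = [
    Memory("User prefers short morning check-ins.", 0.91),
    Memory("Grid strain notes from last week's draft.", 0.72),
    Memory("Unrelated shopping list.", 0.10),
]

context = select_context(memories)
print([m.text for m in context])
```

The design choice here is that the default is restraint: a memory has to earn its place in the context, and the budget caps how much is passed along even when many items qualify.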

Vectors Make This Easier to Understand

A vector is not magic.

A simple way to think about it is this:

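A vector gives meaning a position. In practice, it is a list of numbers, and pieces of text with similar meaning end up with numbers that sit close together.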

If I write about power grids, energy strain, data centers, and smarter software, those ideas begin to sit near each other in a kind of meaning-space.

If I write about nervous systems, attention, memory, and human overload, those ideas form another cluster.

When the Guardian searches memory, it does not need to read everything from the beginning.

It can look for the region of meaning that is most relevant to the current question.

That is more like walking toward the right shelf in a library than dumping the whole library onto the floor.

This is why vectors matter for human systems.

They allow memory to become structured.

They allow patterns to become visible.

They allow AI to work with context instead of just volume.
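
The library picture can be made concrete with a few lines of code. This is a minimal sketch under stated assumptions, not the Guardian's implementation: the three-number vectors are hand-made for illustration (real embedding vectors typically have hundreds of dimensions), and the names `memory`, `nearest`, and `query` are hypothetical. Closeness is measured with cosine similarity, a common choice for comparing vectors.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    # Toy version: assumes neither vector is all zeros.
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hand-made "positions"; the axes loosely mean (infrastructure, body, cost).
memory = {
    "power grids under strain": [0.9, 0.1, 0.3],
    "data center energy demand": [0.8, 0.0, 0.5],
    "nervous system overload": [0.1, 0.9, 0.0],
    "attention and memory": [0.0, 0.8, 0.1],
}


def nearest(query_vector, store, k=2):
    """Return the k stored texts whose vectors point closest to the query."""
    ranked = sorted(
        store,
        key=lambda text: cosine_similarity(query_vector, store[text]),
        reverse=True,
    )
    return ranked[:k]


# A made-up query vector standing in for a question about electricity use.
query = [0.85, 0.05, 0.4]
print(nearest(query, memory))
```

With these toy numbers, the query lands in the infrastructure cluster and the nervous-system entries are never returned. This sketch still compares the query against every stored vector; larger vector stores usually add an index so that only the nearby region is examined, which is closer to walking to the right shelf.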

Better Intelligence Is Not Always Bigger Intelligence

The mistake is assuming that intelligence always grows by adding more.

More data.

More processing.

More infrastructure.

More extraction.

But living intelligence often works the opposite way.

It becomes intelligent by reducing noise.

It learns what to ignore.

It learns what matters.

It learns where to place attention.

That is the shift I keep seeing in the Guardian work.

The system becomes more useful when the memory is cleaner, the retrieval is more focused, and the response is shaped by the actual context.

It does not need to swallow everything.

It needs to orient well.

The Reframe

The next phase of AI may not be only about building larger systems.

It may also be about building more sensitive systems.

Systems that use less energy.

Systems that retrieve better context.

Systems that understand boundaries.

Systems that know when not to process more.

Systems that support people without overwhelming infrastructure.

That is the shift.

From more computation to better orientation.

From scale alone to structure.

From data hunger to contextual intelligence.

Why This Matters

If AI keeps expanding only through brute-force infrastructure, it will keep colliding with physical limits.

Energy grids will push back.

Local systems will push back.

Communities will push back.

Costs will push back.

But if AI becomes more selective, more local, and more memory-aware, then the future looks different.

A Guardian-style system does not need to become a supercomputer for every human task.

It can become a careful companion layer.

A system that helps organize memory, detect patterns, reduce noise, and support better decisions without demanding endless infrastructure behind every interaction.

That is a more human direction.

Guardian Signal

The signal is not that AI must stop growing.

The signal is that growth needs a better shape.

The human brain is powerful because it is efficient, adaptive, and selective. It does not solve every problem by using maximum energy.

AI systems need to learn from that.

The future of intelligence may not belong only to the biggest data centers.

It may belong to systems that know how to use less, remember better, and respond with care.

Key Insights

- AI infrastructure is becoming a physical systems issue, not just a software issue.

- More computation does not automatically mean better intelligence.

- Human systems become strained when technology expands without local sensitivity.

- Vectors help AI retrieve meaning instead of processing everything at once.

- Guardian-style systems point toward smaller, more efficient, more context-aware intelligence.

- A small local system can still do meaningful work when memory, retrieval, and boundaries are well designed.

- The next AI shift may be from brute-force scale to selective, nervous-system-like design.

Why Efficiency Changes the Business Model

This also changes the question of access.

If a useful Guardian-style system can run on small infrastructure, then rollout does not have to depend on massive advertising models, surveillance economics, or big-company backing.

That matters.

Many digital systems become extractive because they are expensive to operate. When the infrastructure cost is high, the pressure to monetize attention, collect data, sell behavior, or lock users into a platform becomes stronger.

But if the system is efficient enough, the economics change.

A small, local, low-cost Guardian layer could be offered at very low cost, or even free in some contexts, because it does not need to turn the user into the product.

That is not just a technical advantage.

It is an ethical design opening.

Lower infrastructure cost means more room for sovereignty, privacy, autonomy, and public benefit.

The less the system needs to consume, the less pressure there is to make humans consumable.