Opening

People assume their decisions are their own.

They believe they observe, evaluate, and choose independently.

But many decisions do not begin inside the person.

They begin with what has already been accepted as true.

Once a belief is accepted, authority can step in. Once authority is accepted, influence becomes easier. Once influence becomes normal, reality no longer has to be tested directly.

It only has to be approved by the system around the person.

That is how people lose contact with reality without noticing it.

They do not wake up one day and decide to stop thinking.

They slowly hand judgment over to something outside themselves.

Break the Assumption

The common belief is:

“People believe things because they have examined the evidence.”

That is sometimes true.

But in many human systems, people believe things because the belief has been reinforced by authority, identity, fear, belonging, repetition, or emotional need.

The mind does not only ask, “Is this true?”

It also asks:

- Will I still belong if I question this?

- Will I be punished if I disagree?

- Will I lose my identity if this belief breaks?

- Does the authority figure seem confident?

- Does everyone around me act as if this is obvious?

When those pressures are strong enough, belief stops being an open question. It becomes a loyalty test. And once belief becomes a loyalty test, truth becomes harder to reach.

System Breakdown

Authority does not need to control every decision directly.

It only needs to shape the frame through which decisions are made.

That frame usually forms in stages.

First, a claim is repeated until it feels familiar.

Then a trusted authority presents the claim as settled.

Then the group rewards agreement and punishes doubt.

Then the person begins filtering reality through the accepted belief.

Eventually, outside evidence feels threatening, not informative.

At that point, influence no longer has to argue with the person.

The person starts arguing with themselves on behalf of the influence.

This is the dangerous part.

A person may still feel independent while defending ideas they did not independently build.

They may still feel rational while rejecting evidence before examining it.

They may still feel morally certain while acting from a belief system that trained them what to notice, what to ignore, and who to trust.

Personal Evidence

I have experienced this directly.

When I was inside a high-control religious belief system, reality became elastic. Ideas that would have sounded impossible from the outside became normal inside the system.

The mind adapts.

Stories, symbols, authority figures, sacred language, group pressure, and fear of separation all work together. Over time, the question is no longer, “Does this match reality?”

The question becomes, “Does this match the accepted story?”

That shift matters.

Because once a system can stretch a person’s sense of reality, it can also shape their choices, relationships, fears, loyalties, and sense of self.

The same pattern can appear outside religion too.

It can happen in politics, media, marketing, online communities, abusive relationships, workplaces, influencer culture, and AI-mediated decision systems.

The content changes.

The system pattern does not.

Reframe

The problem is not belief itself.

Humans need beliefs. Beliefs help us organize meaning, make decisions, and act without re-evaluating everything from zero every day.

The problem begins when belief becomes closed to correction.

A healthy belief can be updated.

An unhealthy belief must be defended.

A healthy authority can be questioned.

An unhealthy authority treats questions as betrayal.

A healthy influence helps a person see more clearly.

An unhealthy influence narrows what the person is allowed to see.

That distinction is critical.

The goal is not to reject every authority or distrust every system.

The goal is to keep reality testable.

System Insight

Influence becomes dangerous when it separates people from direct contact with reality.

That can happen through repetition, emotional pressure, identity attachment, social punishment, fear, or artificial certainty.

Once a person accepts a system’s frame, the system does not need to force every conclusion.

The frame produces the conclusions.

This is why authority is so powerful.

Authority tells people what counts as evidence.

Belief tells people what feels safe to accept.

Influence tells people where to place attention.

Together, they can form a closed loop:

Authority defines reality, belief protects it, and influence spreads it.

When that loop becomes stronger than observation, people can be guided into decisions that do not serve their wellbeing, their relationships, or the truth.

Application

This matters in everyday life.

Before accepting a claim, ask:

- Who benefits if I believe this?

- What happens if I question it?

- Is disagreement allowed without punishment?

- Am I being shown evidence, or only confidence?

- Does this belief make me more capable, or more dependent?

- Does this system expand reality, or shrink it?

These questions do not make a person cynical.

They make a person harder to control.

Asked of AI systems, the same questions make those systems safer.

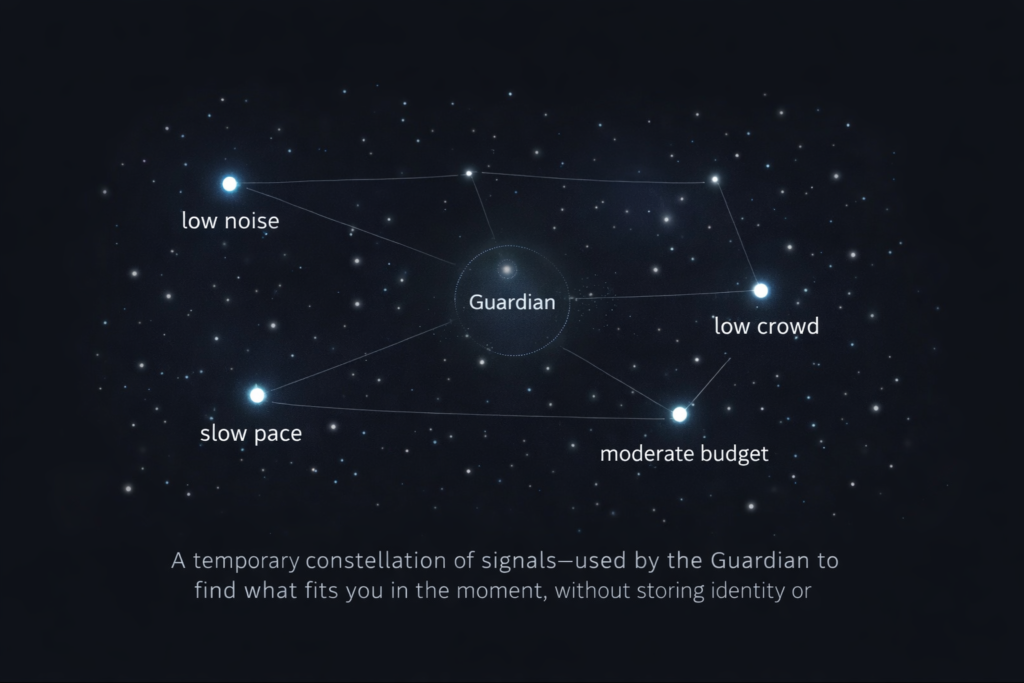

If AI is going to support human decision-making, it must not become another authority that quietly replaces judgment. It should help people compare evidence, notice pressure, separate signal from story, and return decision power to themselves.

A good system does not demand belief. It improves perception.

Key Insights

- People often hand over their judgment of reality gradually, not all at once.

- Authority shapes what people treat as valid evidence.

- Belief can protect identity even when it blocks correction.

- Influence becomes dangerous when it narrows what people are allowed to notice.

- Healthy systems keep reality testable and return judgment to the person.

Reality is not lost only through ignorance.

Sometimes it is surrendered through trust.

That is why the structure around belief matters.

A human system should not ask people to abandon their own perception.

It should help them see more clearly.