A Human Systems View of Survival Responses and Compassion

Opening — The Assumption

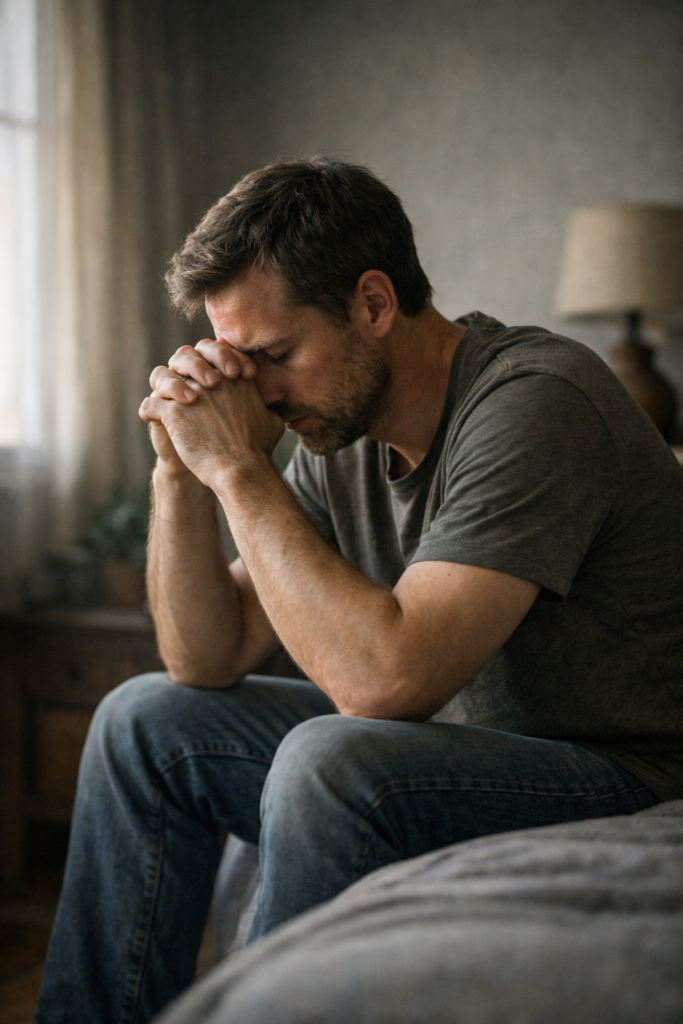

Many people assume that reactions like fear, anger, or withdrawal are signs of weakness, instability, or even moral failure.

We’re taught to judge these responses, both in ourselves and in others.

Break the Assumption

What we label as “overreaction” is often a system doing exactly what it was designed to do.

Fight.

Flight.

Freeze.

These are not flaws. They are survival mechanisms—fast, automatic, and protective.

System Breakdown

The human nervous system prioritizes survival over accuracy.

When a threat is perceived—real or remembered—the system:

- Reduces time for reflection

- Increases speed of response

- Chooses protection over connection

This creates patterns such as:

- Fight → aggression, defensiveness

- Flight → avoidance, withdrawal

- Freeze → shutdown, dissociation

These responses are not chosen consciously.

They are patterns triggered automatically, shaped by past conditioning and stored signals.

Personal Evidence

In the lived experience of conditions such as PTSD, these responses become more visible.

What looks like “irrational behavior” from the outside is often a system reacting to internal signals others cannot see.

Reframe

Instead of asking:

“Why is this person acting like this?”

A more accurate question is:

“What is this system trying to protect?”

This shift moves us from judgment to understanding.

System Insight

Behavior is not random.

It is:

Signal → Interpretation → Response

When the interpretation layer is shaped by past threat,

the response will prioritize safety—even when no danger is present.

Application

You can work with this system in practical ways.

With others:

- Pause before labeling behavior

- Look for the protective function behind reactions

- Reduce intensity before trying to reason

- Create environments where safety is felt, not forced

For yourself:

- Notice your default response pattern (fight, flight, freeze)

- Track when it activates

- Focus on regulation first, meaning second

Key Insights

- Survival responses are functional, not flawed

- The nervous system chooses speed over accuracy

- Behavior is driven by protection, not intention

- Understanding function leads to compassion

- Compassion creates space for better system outcomes

Closing

When we stop treating survival responses as problems to eliminate,

we gain the ability to work with the system instead of against it.

That’s where real compassion begins—not as an idea,

but as a direct understanding of how humans actually function.