We often talk about focus as if it were only a matter of discipline.

Pay attention.

Try harder.

Stop being distracted.

Be more productive.

But sometimes the problem is not a lack of focus.

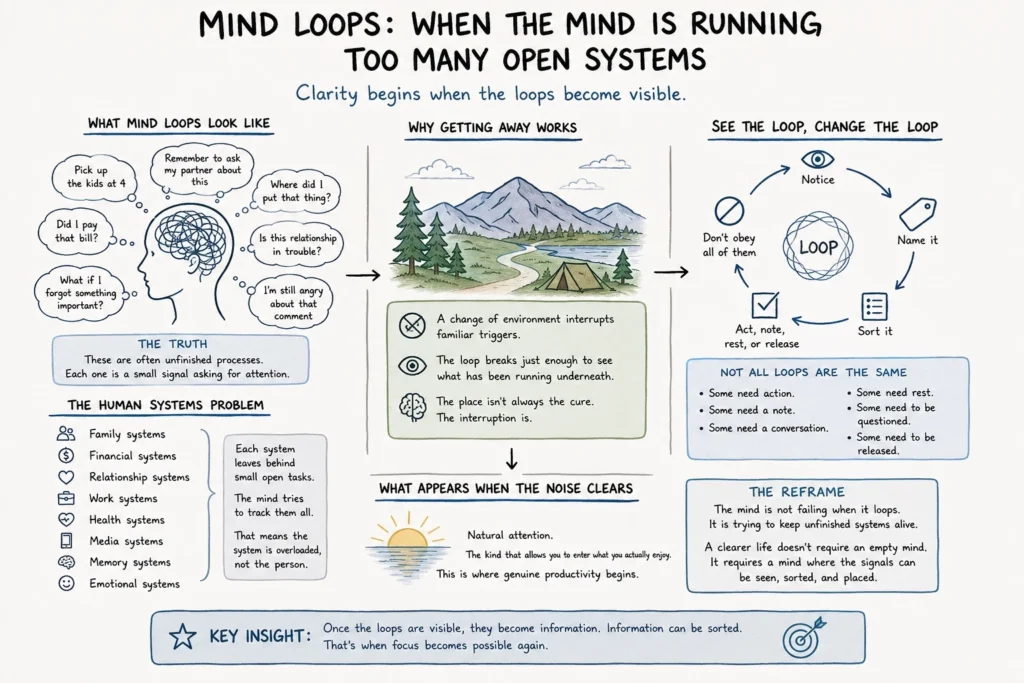

Sometimes the problem is that the mind is running too many open loops at once.

Pick up the kids at four.

Remember to ask my partner about this.

Did I pay that bill?

What was I supposed to do next?

Where did I put that thing?

Is this relationship in trouble?

I need to buy more pickles.

I am still angry about that comment.

What if I forgot something important?

These thoughts can seem random.

But they are not always random.

They are often unfinished processes.

Each one is a small signal asking for attention. A task. A worry. A memory. A fear. A social script. A financial reminder. A relationship question. A body signal. A piece of emotional residue that has not yet cleared.

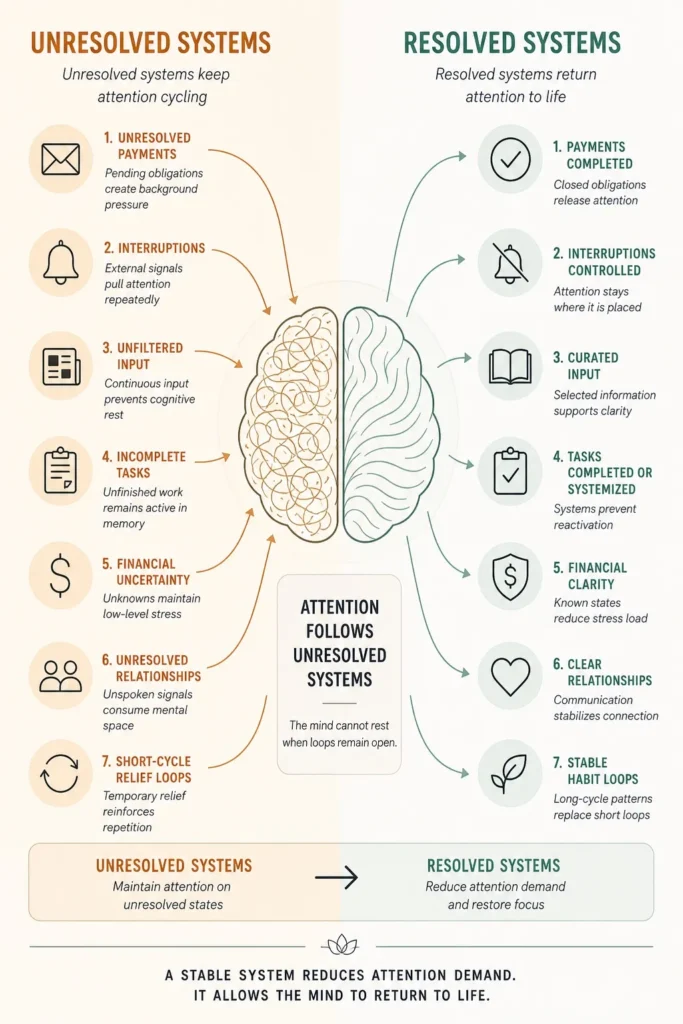

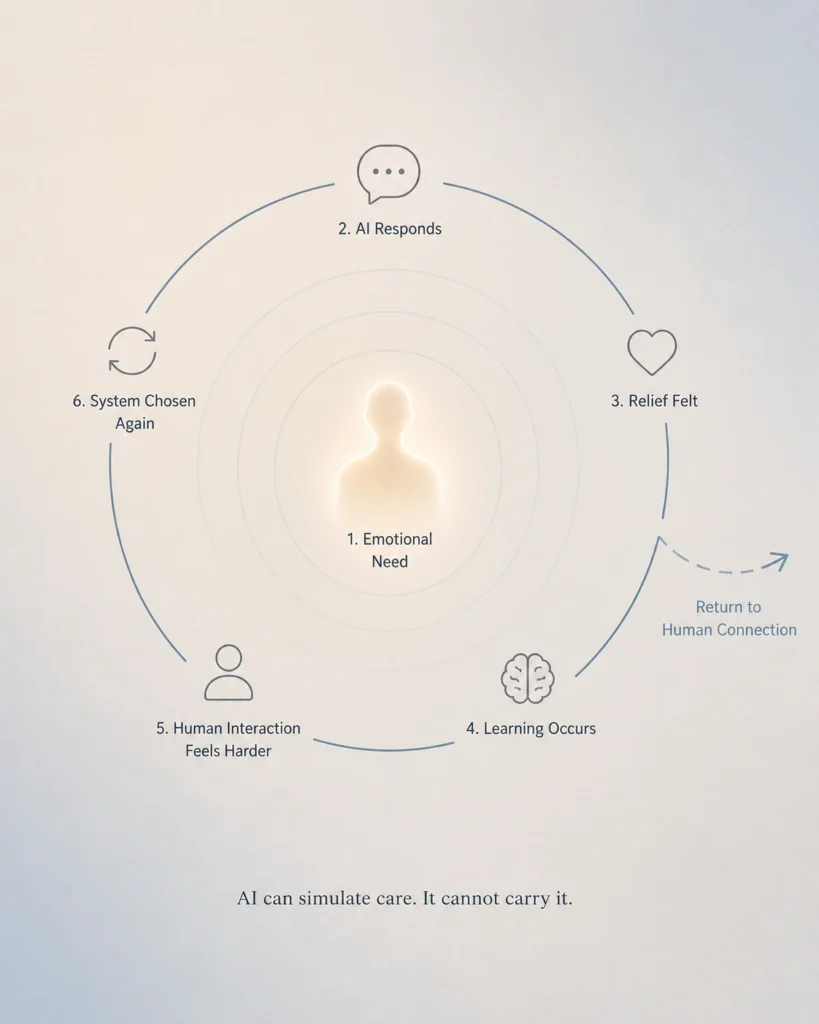

The mind keeps looping because something has not been resolved, placed, understood, trusted, or released.

The Human Systems Problem

This is a Human Systems problem.

We often treat mental noise as a personal weakness, but it is often cognitive overload.

Modern life asks the mind to hold too many systems at the same time.

Family systems.

Financial systems.

Relationship systems.

Work systems.

Health systems.

Media systems.

Memory systems.

Emotional systems.

Each system leaves behind small open tasks.

The mind tries to track them all.

That does not mean the mind is broken.

It means the system is overloaded.

A person may look distracted from the outside, but internally they may be managing dozens of active loops at once. Some are practical. Some are emotional. Some are old. Some are not even important anymore, but they keep returning because they were never sorted.

Focus becomes difficult because attention is already occupied.

Why Getting Away Works

Maybe this is why people love vacations, camping, long walks, or simply getting away.

It is not always about being somewhere different.

Sometimes the value is that the old loop gets interrupted.

The familiar triggers are gone for a moment. The same rooms, screens, bills, reminders, conversations, objects, obligations, and emotional scripts are not constantly pulling on attention.

The loop breaks just enough for the person to see what has been running underneath.

That is why distance can feel like clarity.

Not because life disappeared.

Because the background noise changed.

The mind finally has enough space to show what it has been carrying.

Seeing the Loop

I think I finally reached the point where I could see it.

Not perfectly.

Not permanently.

But clearly enough to recognize the loops for what they were.

They were not my whole mind.

They were repeated signals, unfinished tasks, old fears, rehearsed conversations, small obligations, and emotional echoes asking for attention.

Once I could see them, I did not have to obey all of them.

That changed something.

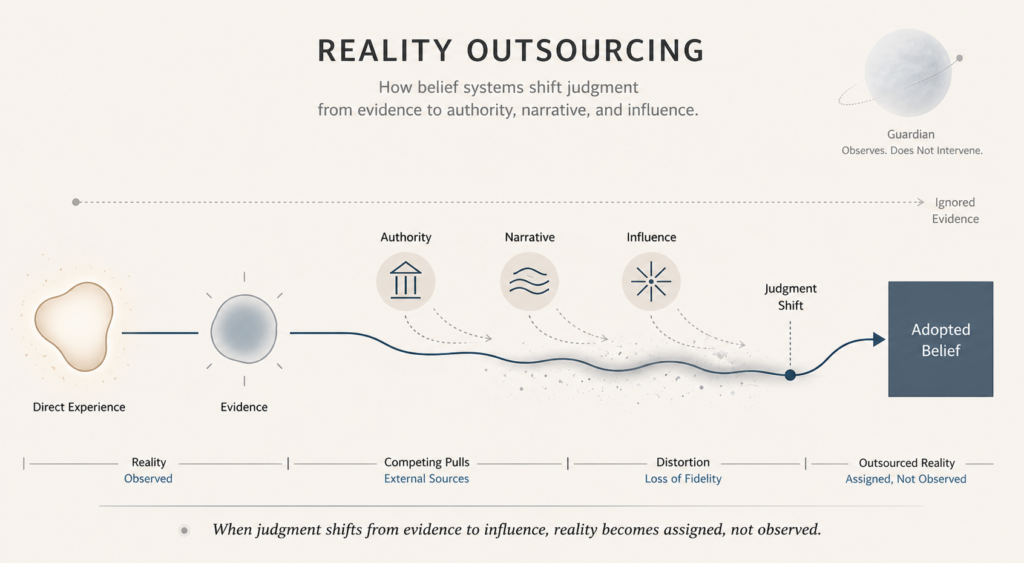

Because when the loops are invisible, they feel like reality.

When they become visible, they become information.

And information can be sorted.

Some loops need action.

Some need a note.

Some need a conversation.

Some need rest.

Some need to be questioned.

Some need to be released.

The goal is not to erase the mind.

The goal is to see what is running.

Natural Attention

When enough noise clears away, something different appears.

Natural attention.

The kind that lets people become absorbed in what they actually enjoy.

Not forced productivity.

Not pressure.

Not performance.

Coherence.

This is where genuine productivity often begins.

Not from pushing harder, but from reducing the number of unresolved loops competing for the same attention.

Calm is not always something we find by adding another wellness practice.

Sometimes calm begins when we stop feeding every loop as if it deserves control.

Sometimes calm begins when we can finally say:

This is a task.

This is a fear.

This is a memory.

This is a practical reminder.

This is an old script.

This is not the whole truth.

That separation matters.

Because once a loop is named, it loses some of its power.

The Reframe

The mind is not failing when it loops.

It is trying to keep unfinished systems alive.

The problem is not always the thought itself.

The problem is when too many loops remain open, unnamed, and unmanaged.

A clearer life does not require an empty mind.

It requires a mind where the signals can be seen, sorted, and placed.

That is when focus becomes possible again.

Not because the person became more disciplined.

Because the system became more coherent.

Key Insights

- Mental loops are often unresolved system signals, not personal failure.

- Focus becomes difficult when too many open loops compete for attention.

- A change of environment can interrupt familiar triggers long enough to reveal what is underneath.

- Once a loop becomes visible, it can be sorted instead of obeyed.

- Calm often begins when the mind stops treating every signal as equally urgent.