Opening

The AI and human connection gap is becoming more visible as people turn to artificial intelligence for conversation, emotional support, and clarity.

Not because they prefer machines.

Because access to consistent, non-judgmental human connection is limited, expensive, or unreliable.

AI didn’t create this shift.

It revealed a system that was already strained.

Break the Assumption

This is where the AI and human connection gap becomes concrete rather than theoretical.

Assumption:

AI is replacing human connection.

Reality:

AI is filling a gap where human systems are failing to meet demand.

The concern is not that AI exists.

The concern is what happens when it becomes the primary source of feedback.

System Breakdown

System Flow

Reduced human connection

↓

Increased AI interaction

↓

Consistent, low-friction responses

↓

Reduced exposure to disagreement or correction

↓

Stabilized internal narratives (accurate or not)

↓

Decreased need to engage with humans

↓

Loop reinforces itself

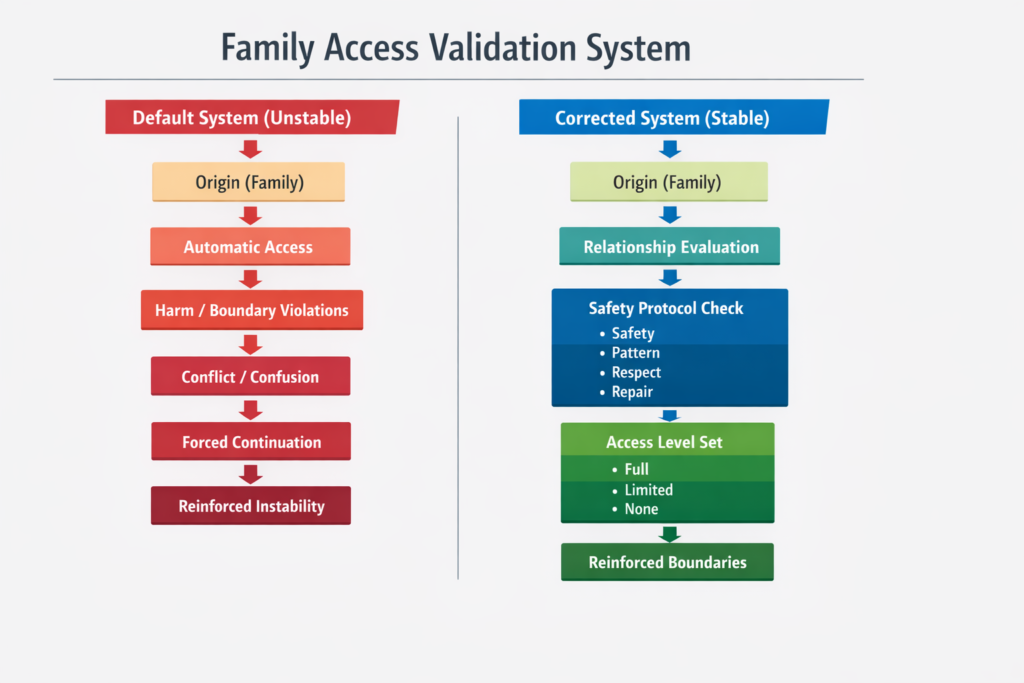

What Makes This System Different

Human relationships include:

- Misunderstanding

- Friction

- Repair

- Adjustment

These are not flaws.

They are calibration mechanisms.

AI interaction often removes:

- social risk

- emotional cost

- unpredictability

This creates a smoother experience, but a less corrective one.

Personal Evidence

During and after COVID, access to mental health support where I lived was severely limited. The system was strained to the point where reliable human support was not consistently available, and my immediate environment was itself a source of problems.

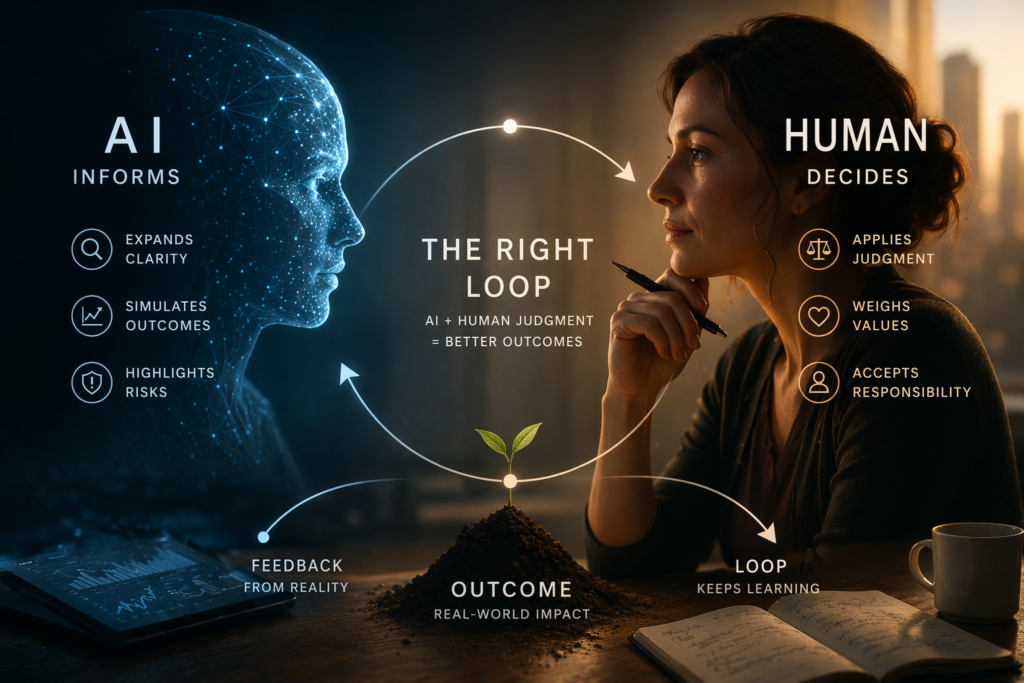

AI became a tool I used, not as a replacement for human connection, but as a way to process context and identify available options.

It did not tell me what to do.

It helped me see what I could do.

For example:

- Recognizing that I could function in Spanish

- Identifying that Spain could provide a more stable environment

- Understanding that relocation was a viable path, not an abstract idea

This shifted the system from:

- feeling constrained and reactive

to:

- seeing multiple paths and making deliberate choices

The outcome was not dependency.

It was increased agency through expanded visibility.

Reframe

The issue is not AI.

The issue is unbalanced input systems.

Humans require:

- reflection (AI can provide this)

- correction (humans provide this)

- shared experience (only humans provide this)

When one replaces the others, the system becomes unstable.

System Insight

Any system that provides validation without correction will eventually distort perception.

If the AI and human connection gap continues to widen, feedback systems will become increasingly unbalanced.
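The claim about validation without correction can be illustrated with a toy model. This is only a sketch under stated assumptions, not a model of real cognition: the function name `simulate_belief`, the fixed `drift` per step, and the `correction` rate are all hypothetical values chosen for illustration.

```python
def simulate_belief(steps, correction, drift=0.1, truth=0.0):
    """Toy model: how far a belief ends up from the truth.

    drift      -- hypothetical nudge added by each unchallenged, validating exchange
    correction -- hypothetical fraction of the error removed by outside pushback
                  (0.0 means no correction at all)
    """
    belief = truth
    for _ in range(steps):
        belief += drift                          # validation reinforces the current narrative
        belief -= correction * (belief - truth)  # correction pulls it back toward reality
    return belief


# With no correction, the gap from the truth grows with every step.
uncorrected = simulate_belief(steps=50, correction=0.0)

# With regular correction, the gap settles at a small, bounded offset.
corrected = simulate_belief(steps=50, correction=0.5)
```

In this sketch the uncorrected belief keeps moving away from the truth, while the corrected one stays close to it. The numbers carry no empirical weight; the point is only the shape of the two trajectories.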

AI can:

- reflect

- organize

- clarify

It should not become:

- the sole validator

- the primary emotional reference

- the replacement for human connection

Application

1. Separate Roles Clearly

Use AI for:

- structuring thoughts

- exploring ideas

- reducing ambiguity

Use humans for:

- emotional calibration

- disagreement

- shared reality

2. Monitor Input Balance

If most interaction is:

- predictable

- affirming

- frictionless

Then the system is becoming closed-loop.

Introduce:

- differing perspectives

- real conversations

- environments with uncertainty
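For readers who like to make this check explicit, here is a minimal sketch of what monitoring input balance could look like. Everything in it is an assumption made for illustration: the `Interaction` record, the self-assigned true/false labels, and the 0.5 threshold standing in for "most" are hypothetical, not an established measure.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    """One conversation, labeled by hand after the fact (hypothetical schema)."""
    predictable: bool
    affirming: bool
    frictionless: bool


def smooth_share(log):
    """Fraction of interactions that were predictable, affirming, and frictionless."""
    if not log:
        return 0.0
    smooth = sum(1 for i in log if i.predictable and i.affirming and i.frictionless)
    return smooth / len(log)


def is_closed_loop(log, threshold=0.5):
    """True when 'most' interactions were smooth; the threshold is an assumed cutoff."""
    return smooth_share(log) > threshold


# Example week: four smooth exchanges and one conversation that pushed back.
week = [Interaction(True, True, True)] * 4 + [Interaction(False, False, False)]
print(smooth_share(week), is_closed_loop(week))
```

The labels are subjective by design. The value of the exercise is noticing the ratio, not computing it precisely.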

3. Reintroduce Friction Intentionally

Friction is not failure.

It is how humans:

- adjust beliefs

- refine communication

- maintain alignment with reality

Avoiding friction entirely leads to internal drift.

4. Maintain Autonomy

AI should support:

- decision clarity

Not:

- decision replacement

The moment AI becomes the primary source of direction, autonomy weakens.

Key Insights

- AI is not replacing human connection; it is exposing where that connection is insufficient

- Validation without correction creates unstable perception systems

- Human friction is a necessary calibration mechanism

- Balanced input systems are required for stable cognition

- AI is most effective as a support layer, not a replacement layer

- Expanding visible options increases human agency without reducing autonomy

Closing

The future is not human or AI.

It depends on how well the two are balanced within a system that preserves human stability.

AI can support clarity.

Only humans can sustain shared reality.

The system fails when those roles are confused.