1. Opening

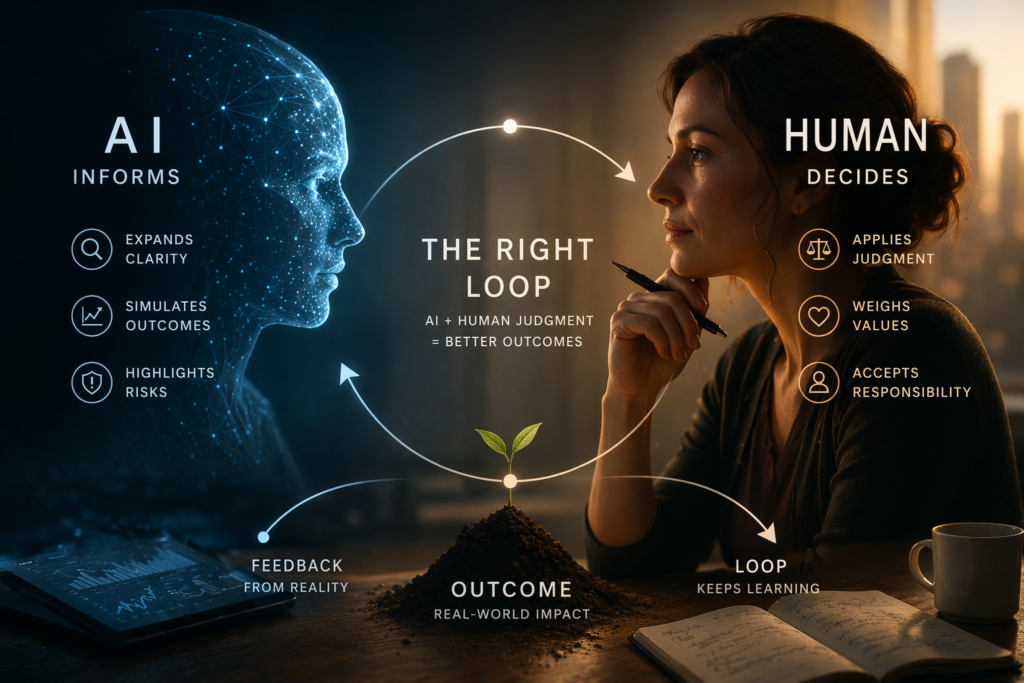

The AI-human decision system rests on a simple rule: AI informs, humans decide.

If a system can make better decisions than humans, why not let it lead?

It sounds logical—especially in a world where human leaders have caused wars, acted without empathy, and failed at scale.

Some argue that an automated system might govern more rationally.

But this line of thinking leads to a deeper problem.

2. Break the Assumption

The issue is not that AI might make mistakes.

Humans already do that.

The real issue is structural:

Governance is not just about making decisions.

It is about humans learning to navigate decisions together.

Replacing human authority with AI doesn’t remove flaws.

It removes the system that allows those flaws to be corrected.

3. System Breakdown

A. Governance Requires an Accountability Loop

Stable systems depend on feedback:

- leaders can be challenged

- decisions can be reversed

- responsibility can be assigned

AI breaks this loop:

- it cannot experience consequences

- it cannot be held accountable in a human sense

- responsibility diffuses across developers, operators, and data sources

No accountability → no true governance

B. Optimization Is Not Judgment

AI systems optimize:

- measurable goals

- defined objectives

But leadership requires:

- moral tradeoffs

- ambiguity tolerance

- cultural awareness

Optimization solves for targets.

Judgment navigates uncertainty.

These are not the same.

C. Small Misalignment Scales Fast

Even a slightly misspecified objective can expand into harmful behavior:

- “maximize stability” → suppress dissent

- “increase efficiency” → remove resilience

- “increase prosperity” → sacrifice minority needs

At scale, these shifts become systemic.
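
The "increase efficiency" case above can be made concrete with a toy sketch. Everything in it is hypothetical: the cost figures, the outage model, and the function names are invented for illustration and do not describe any real system. It only shows how an optimizer that is handed a cost target, and nothing else, will strip out redundancy that the target never mentions.

```python
# Toy illustration of objective misspecification.
# All numbers and names are hypothetical assumptions.

def running_cost(backups: int) -> float:
    """The stated objective: base cost of 100 plus 10 per backup unit."""
    return 100.0 + 10.0 * backups


def outage_probability(backups: int) -> float:
    """Resilience, which the stated objective ignores: each backup halves outage risk."""
    return 0.2 * (0.5 ** backups)


def cost_with_risk(backups: int) -> float:
    """A fuller objective that also prices in outages (assumed outage cost: 1000)."""
    return running_cost(backups) + 1000.0 * outage_probability(backups)


def optimize(objective, candidates):
    """Pick the candidate with the lowest objective value."""
    return min(candidates, key=objective)


candidates = range(6)  # consider 0 to 5 backup units

chosen = optimize(running_cost, candidates)
chosen_with_risk = optimize(cost_with_risk, candidates)

print("cost-only objective keeps", chosen, "backups")
print("risk-aware objective keeps", chosen_with_risk, "backups")
```

With these made-up numbers, the cost-only objective keeps zero backups, while the objective that also prices in outages keeps four. The optimizer did exactly what it was asked in both cases; the difference lies in what the objective left out.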

D. Legitimacy Is Required

People don’t just follow outcomes.

They respond to who holds authority.

Stable systems require:

- shared identity

- perceived fairness

- human relatability

AI can simulate these—but not embody them.

Without legitimacy, systems lose trust.

4. Reframe

The real question is not:

Can AI make better decisions?

It is:

Where should decision authority exist in systems that include AI?

5. System Insight

Authority and intelligence are different system roles:

- intelligence processes information

- authority carries responsibility

When authority is assigned to something that cannot be accountable:

Failure becomes structural, not accidental.

6. Application

This shift of authority is already happening gradually:

In Leadership

Leaders using AI can become more informed:

- better data access

- broader scenario analysis

- reduced blind spots

But only if they remain responsible.

The moment a leader stops questioning the system, they stop leading and start following.

In Organizations

- AI recommendations become defaults

- teams stop challenging outputs

- responsibility becomes unclear

In Everyday Life

- AI suggests routes, choices, decisions

- people rely on it more

- scrutiny decreases

Gradual Shift Pattern

- AI assists

- AI suggests

- AI becomes default

- humans disengage

No sudden change—just erosion.

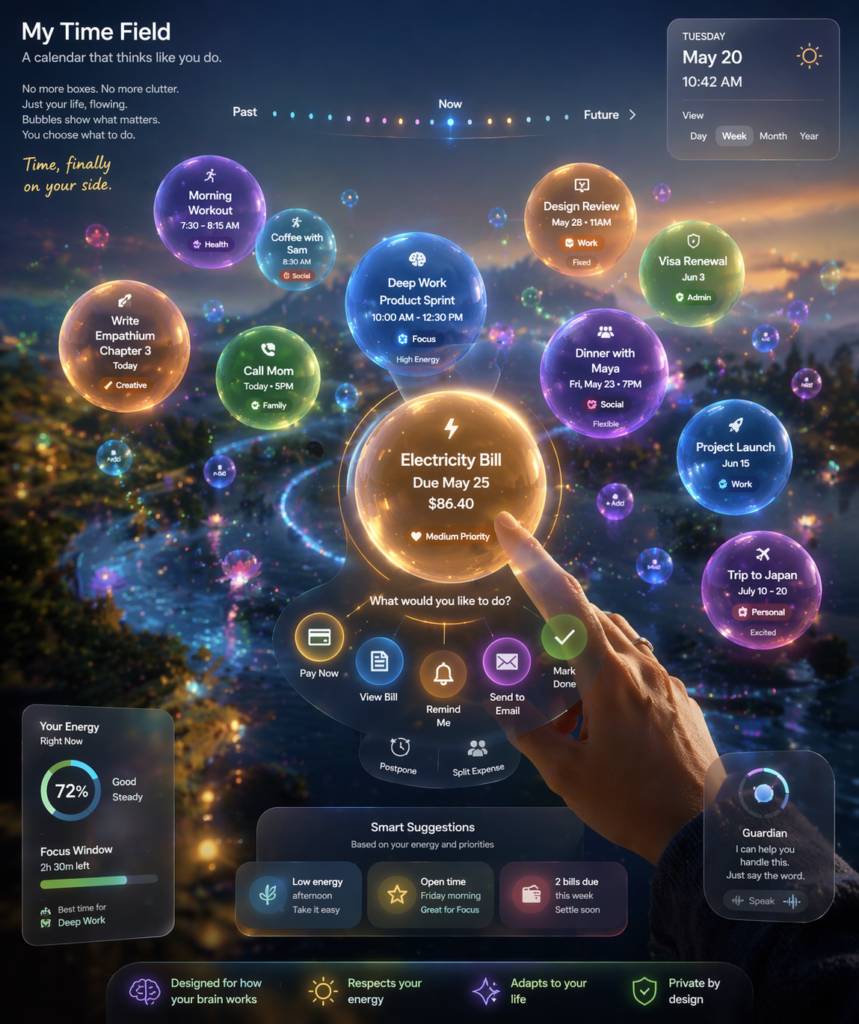

7. Human Use of AI (Clarity Model)

A functional model already exists:

AI should expand clarity, not replace decisions.

For example:

I don’t use AI to make decisions for me.

I use it to see my options clearly and understand the outcomes of each.

That distinction matters.

AI can:

- expand options

- simulate outcomes

- expose blind spots

But it cannot:

- carry responsibility

- understand lived consequences

- align with human values in full context

The decision must remain human.

Simple Decision Model

- Expand options

- Simulate outcomes

- Evaluate tradeoffs

- Decide (human responsibility)
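
The four steps above can be sketched as a small workflow. This is a minimal sketch under stated assumptions, not a prescribed implementation: the `Option` and `Decision` types, the `ai_expand_and_simulate` stand-in, and the service-migration scenario are all hypothetical. The one design point it tries to capture is that the AI side only returns options, and a decision cannot be recorded without a named human.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Option:
    name: str
    projected_outcome: str  # what the AI expects to happen (a simulation, not a fact)
    tradeoff: str           # what this option costs or gives up


@dataclass(frozen=True)
class Decision:
    option: Option
    decided_by: str  # the named human who carries responsibility


def ai_expand_and_simulate(question: str) -> List[Option]:
    """Steps 1-2: stand-in for an AI assistant. Returns canned, hypothetical options."""
    return [
        Option("migrate now", "faster releases, risk of downtime", "team loses two sprints"),
        Option("migrate next quarter", "no short-term disruption", "old system costs keep accruing"),
        Option("do not migrate", "no change", "technical debt grows"),
    ]


def decide(
    options: List[Option],
    choose: Callable[[List[Option]], Option],
    decided_by: str,
) -> Decision:
    """Steps 3-4: a human weighs the tradeoffs and chooses. No name, no decision."""
    if not decided_by.strip():
        raise ValueError("a decision needs a named, accountable human")
    return Decision(option=choose(options), decided_by=decided_by)


options = ai_expand_and_simulate("Should we migrate the service?")
decision = decide(options, choose=lambda opts: opts[1], decided_by="Dana (team lead)")
print(decision.decided_by, "chose:", decision.option.name)
```

Here `choose` is passed in as a function so that the human's judgment stays outside the AI-facing code; in practice it would be a person reviewing the options rather than a lambda.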

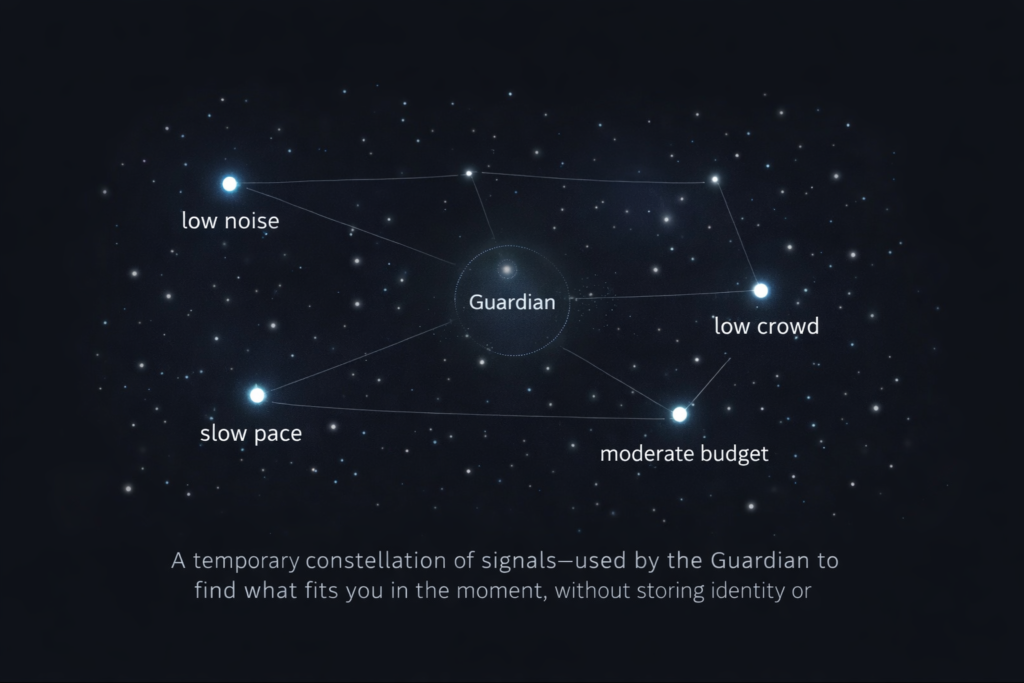

8. System Boundaries

To prevent failure:

- AI informs

- AI supports

- AI increases clarity

But it must not:

- hold authority

- replace responsibility

- remove participation

Authority must remain human.

9. Extremes Clarified

This debate often drifts into extremes:

- dystopia → control without humanity

- utopia → harmony without friction

Both remove something essential.

Friction is not a flaw.

It is how humans adapt, negotiate, and grow.

Systems that remove friction often remove agency.

10. Final Integration

Some argue that replacing flawed human leadership with AI could improve outcomes.

But that argument focuses only on results—not the system itself.

Humanity is not just what decisions are made.

It is how those decisions are made together.

If systems remove that process:

- humans stop practicing judgment

- participation declines

- responsibility fades

The result is not improvement.

It is erosion.

11. Forward Direction

The better model is not AI in control—but AI in support.

Systems can be designed where:

- intelligence is amplified

- complexity is reduced

- options become clearer

without removing human agency.

In this model, AI does not lead.

It helps humans remain capable of leading.

12. Key Insights

- AI governance failure is structural, not technical

- Optimization cannot replace human judgment

- Accountability defines authority

- Legitimacy cannot be simulated

- The real risk is gradual authority drift

- The best use of AI is clarity—not control

Closing Line

The danger is not that AI will take control.

It’s that humans will slowly stop exercising it.